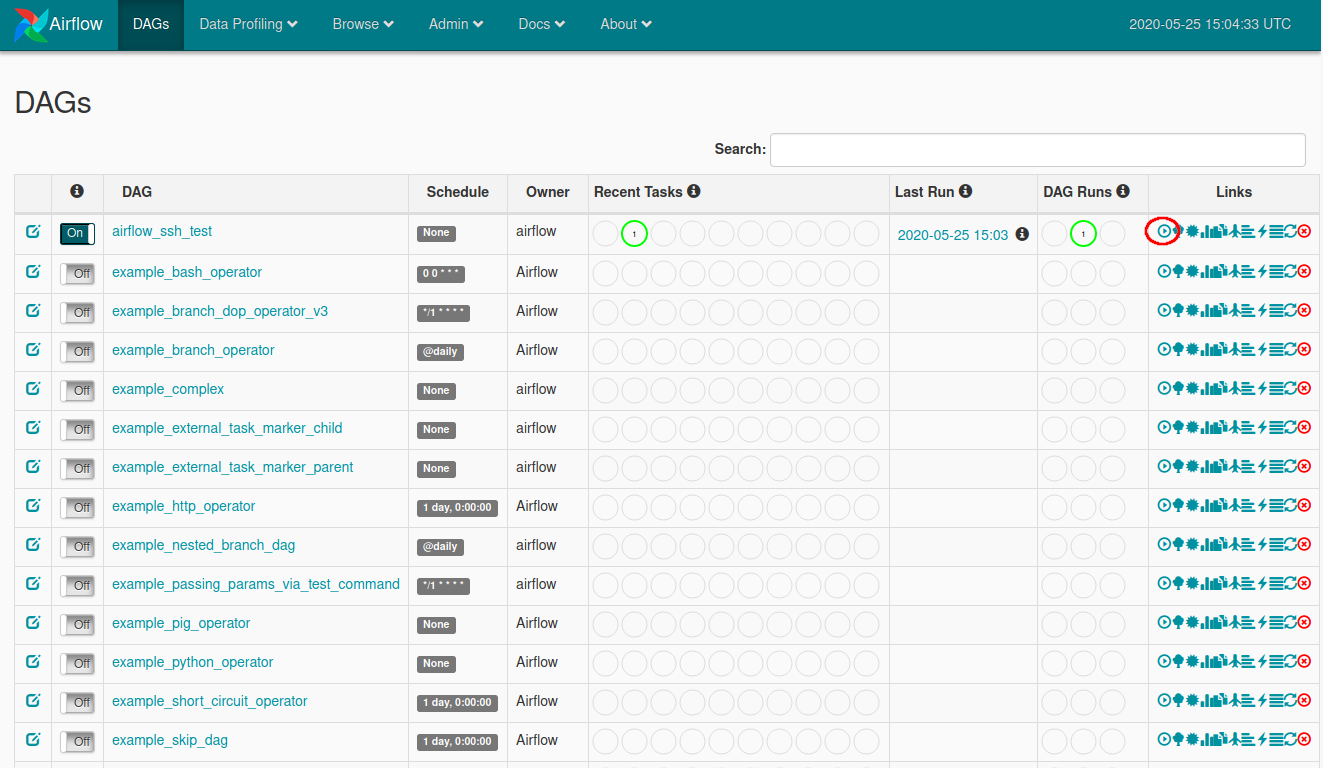

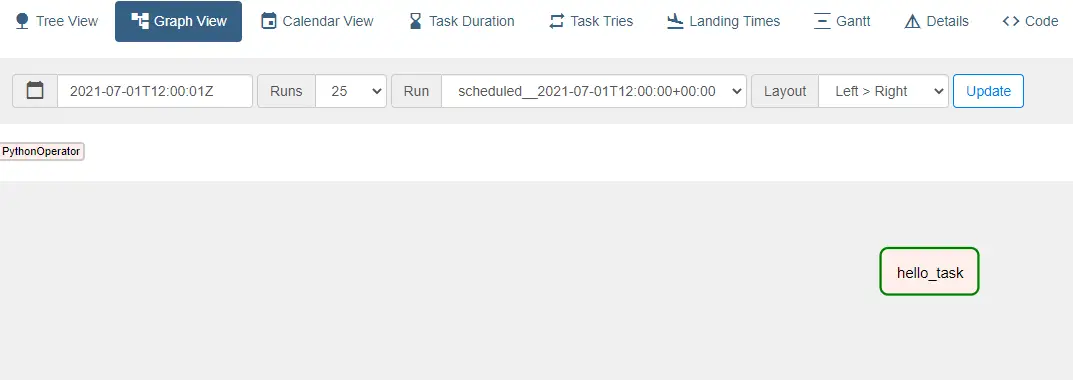

The examples in this article are tested with Python 3.8.

Install the Airflow Azure Databricks integration

To install the Airflow Azure Databricks integration, open a terminal and run the following commands. Be sure to substitute your user name and email in the last line:

`mkdir airflow`

`pipenv install apache-airflow-providers-databricks`

`airflow users create --username admin --firstname <firstname> --lastname <lastname> --role Admin --email <email>`

When you copy and run the script above, you perform these steps:

1. Create a directory named `airflow` and change into that directory.
2. Use `pipenv` to create and spawn a Python virtual environment. Databricks recommends using a Python virtual environment to isolate package versions and code dependencies to that environment. This isolation helps reduce unexpected package version mismatches and code dependency collisions.
3. Initialize an environment variable named `AIRFLOW_HOME` set to the path of the `airflow` directory.
4. Install Airflow and the Airflow Databricks provider packages.
5. Create a `dags` directory. Airflow uses the `dags` directory to store DAG definitions.
6. Initialize a SQLite database that Airflow uses to track metadata. The SQLite database and default configuration for your Airflow deployment are initialized in the `airflow` directory. In a production Airflow deployment, you would configure Airflow with a standard database.

To install extras, for example celery and password, run: `pip install "apache-airflow[celery,password]"`

Start the Airflow web server and scheduler

The Airflow web server is required to view the Airflow UI. To start the web server, open a terminal and run the following command: `airflow webserver`

The scheduler is the Airflow component that schedules DAGs. To run it, open a new terminal, enter the virtual environment with `pipenv shell`, make sure `AIRFLOW_HOME` is set as in the installation steps, and then run `airflow scheduler`.

To verify the Airflow installation, you can run one of the example DAGs included with Airflow. In a browser window, open the Airflow UI. The Airflow DAGs screen appears.
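As a quick sanity check on the directory layout this article describes (a `dags` directory, plus the SQLite database and default configuration initialized in the `airflow` directory), a small shell function along these lines may help. It is only a sketch: `check_airflow_home` is a hypothetical helper and not part of the Airflow CLI, and the file names `airflow.cfg` and `airflow.db` are assumed Airflow defaults that the article itself does not state.

```shell
#!/usr/bin/env bash
# Sketch: check that a directory looks like an initialized Airflow home.
# Assumed defaults (not stated in the article): the config file is named
# airflow.cfg and the SQLite metadata database is named airflow.db.
# check_airflow_home is a hypothetical helper, not an Airflow command.

check_airflow_home() {
  # Use the first argument if given, otherwise fall back to AIRFLOW_HOME.
  local home="${1:-${AIRFLOW_HOME:-}}"
  local missing=0
  local f

  if [ -z "$home" ]; then
    echo "error: pass a directory or set AIRFLOW_HOME" >&2
    return 2
  fi

  # The dags directory holds DAG definitions.
  if [ ! -d "$home/dags" ]; then
    echo "missing directory: $home/dags" >&2
    missing=1
  fi

  # Default configuration and SQLite database written at initialization.
  for f in airflow.cfg airflow.db; do
    if [ ! -f "$home/$f" ]; then
      echo "missing file: $home/$f" >&2
      missing=1
    fi
  done

  if [ "$missing" -eq 0 ]; then
    echo "ok: $home looks like an initialized Airflow home"
  fi
  return "$missing"
}
```

For example, `check_airflow_home "$AIRFLOW_HOME"` prints an `ok:` line and returns 0 when all three items are present. Otherwise it lists what is missing on stderr and returns 1.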
